Unify • Simplify • Amplify

Traditional data engineering is broken.

Enterprises spend millions manually wiring databases, ETL pipelines, semantic layers, and APIs — only to create fragile systems, constant rework, and compounding technical debt.

Stop coding your data stack. Generate it.

Data unified across three major systems in ten days, with 50 new business processes, integration with inventory hardware, and a warehouse management system finally unjammed.

IoT data platform built for ML/AI, with v1.0 delivered in three weeks: anomalies across IoT data corrected, external and internal datasets unified, new meaning surfaced in source data, and a scalable foundation.

Entire operation unified into a single real-time system and live in production within 90 days, with inventory, logistics, and reporting synchronised without integration overhead or technical debt.

Why Occam?

A single, governed model keeps every system aligned — ERP, CRM, finance, HR, analytics, and AI — removing drift, rework, and technical debt as the business changes.

“Data engineering is largely unchanged since the relational database was commercialised in 1979 — and it’s long overdue for a fundamental shift. Everything IT has done in data engineering so far has been a prototype. It’s time for Data 2.0.”

Occam Founder, Steven MacLeod

The Occam Model

Most platforms assemble systems, then manage the data.

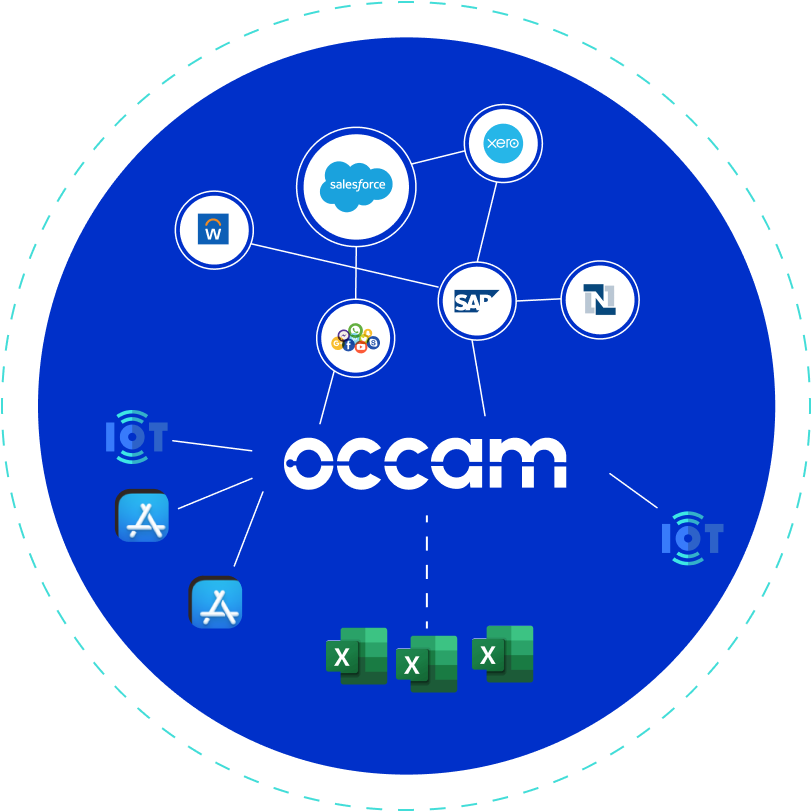

Occam defines the logic, then compiles the system — enabling orders-of-magnitude faster deployment at a fraction of the cost.

Occam works as a force multiplier by reducing the engineering effort required to build and maintain complex data systems.

The Occam Platform

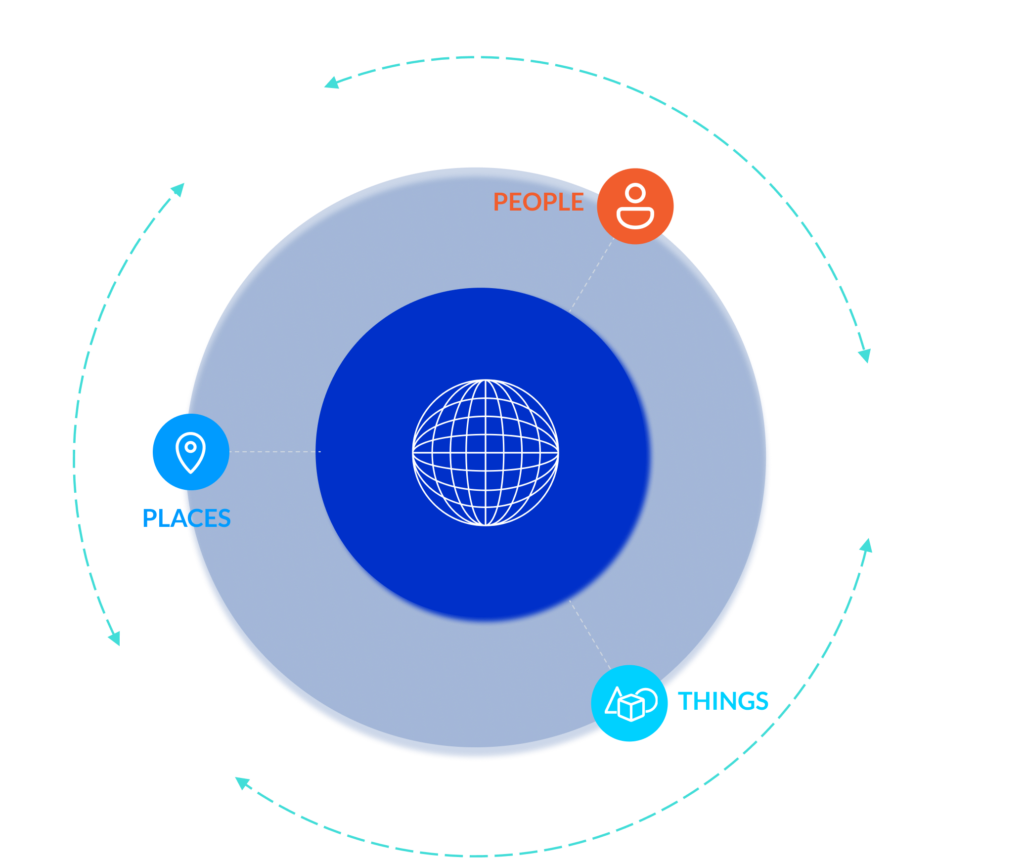

Occam is a self-generating, ontology-native data platform.

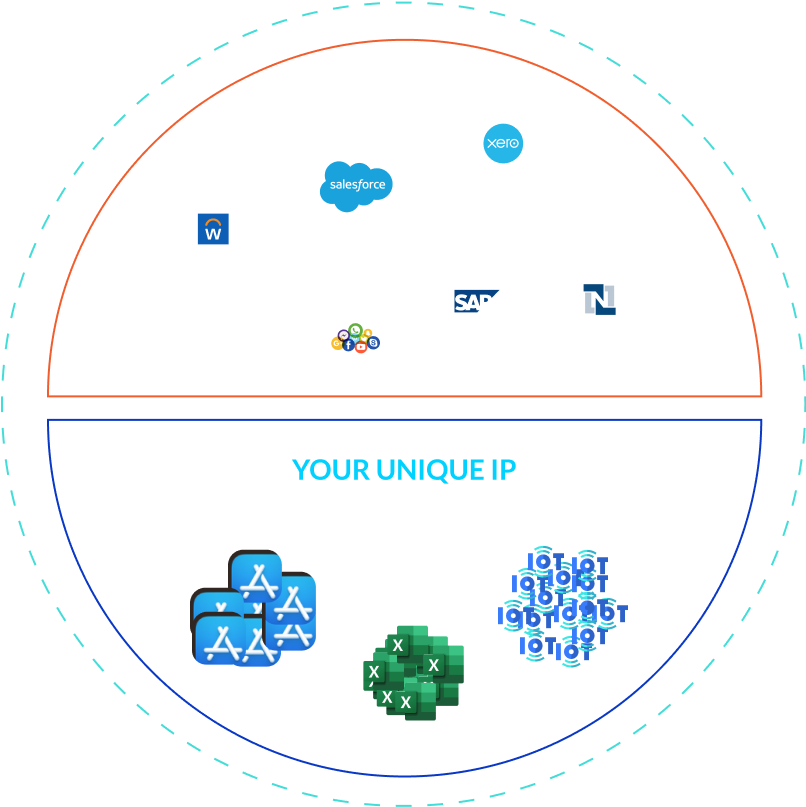

Instead of coding systems and fixing data later, Occam defines business logic once — as executable metadata — and compiles the entire operational and analytical system automatically.

This keeps data consistent, governed, and adaptable as the business changes.
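To make the idea of "define once, compile the system" concrete, here is a minimal sketch in Python. It is not Occam's implementation or API: the `Entity` and `Attribute` classes, the `compile_table`, `compile_view`, and `build` functions, and the `order_line` example are all hypothetical names invented for illustration. It assumes a toy ontology that declares attributes and derived measures, and generates both an operational table and an analytical view from that single definition, using SQLite from the standard library.

```python
import sqlite3
from dataclasses import dataclass, field


@dataclass
class Attribute:
    """One declared property of a business entity (hypothetical model)."""
    name: str
    sql_type: str
    required: bool = True


@dataclass
class Entity:
    """A business entity: its attributes plus derived measures.

    `measures` maps a measure name to a SQL expression over the attributes.
    """
    name: str
    attributes: tuple
    measures: dict = field(default_factory=dict)


def compile_table(entity):
    """Generate the operational table definition from the entity."""
    cols = ["id INTEGER PRIMARY KEY"]
    for attr in entity.attributes:
        null = " NOT NULL" if attr.required else ""
        cols.append(f"{attr.name} {attr.sql_type}{null}")
    return f"CREATE TABLE {entity.name} ({', '.join(cols)})"


def compile_view(entity):
    """Generate the analytical view from the same entity definition."""
    exprs = [f"{expr} AS {name}" for name, expr in entity.measures.items()]
    return (
        f"CREATE VIEW {entity.name}_metrics AS "
        f"SELECT {', '.join(exprs)} FROM {entity.name}"
    )


def build(entities):
    """Create an in-memory database containing every generated object."""
    conn = sqlite3.connect(":memory:")
    for entity in entities:
        conn.execute(compile_table(entity))
        if entity.measures:
            conn.execute(compile_view(entity))
    return conn


# The single source of truth: structure and business logic declared together.
order_line = Entity(
    name="order_line",
    attributes=(
        Attribute("sku", "TEXT"),
        Attribute("quantity", "INTEGER"),
        Attribute("unit_price", "REAL"),
    ),
    measures={"total_revenue": "SUM(quantity * unit_price)"},
)

conn = build([order_line])
conn.execute(
    "INSERT INTO order_line (sku, quantity, unit_price) VALUES ('A-1', 3, 2.5)"
)
conn.execute(
    "INSERT INTO order_line (sku, quantity, unit_price) VALUES ('B-2', 2, 10.0)"
)
total = conn.execute("SELECT total_revenue FROM order_line_metrics").fetchone()[0]
print(total)
```

In this sketch, changing a rule means editing the `measures` entry and calling `build` again; the table and the view are regenerated from the same definition, so the operational and analytical sides cannot diverge. A production system would also have to handle existing data, which this toy example deliberately ignores.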

Occam replaces fragmented databases, spreadsheets, and siloed applications with a single, governed model — providing real-time visibility, compliance, and confidence at scale.

Business rules live in the ontology, not buried in code.

Changes are applied once and recompiled instantly across every system — without migrations or rewrites.

AI depends on clean, complete, and coherent data.

Occam provides a deterministic foundation AI can trust — without semantic patching or manual alignment.

Deterministic system generation replaces manual database design, ETL pipelines, and semantic layers.

Zero ETL

The pipeline is invisible and auto-generated from the ontology.

Zero Drift

Operational and analytical data are mathematically identical.

Zero Technical Debt

When business logic changes, the system recompiles instantly — no rewrites, no migrations.

3 min read · Oct 08, 2025

Data 2.0 and the Pinch Point the Industry Can’t Escape

Steven MacLeod has a stark message: Data 2.0 is coming, and it will trigger one of the biggest structural shifts the industry has ever faced.

3 min read · Sept 29, 2025

Why We Need to Move to Data 2.0

At the recent DataEngBytes conference in Sydney, most of the 500 people in the room were talking about how to add agentic AI to their stack. Occam founder Steven MacLeod was one of the few who didn’t…

3 min read · Aug 20, 2025

At Last We Get to Talk About AI

Our final open letter to New Zealand’s Parliamentary Commissioner for the Environment, addressing the proverbial elephant in the room: AI.